I wrote about the CEFR framework a while back, explaining how I thought it was misused in many EAL contexts. It’s been on my mind again recently, so I just wanted to reiterate some points and add a few more. Here are some practicalities worth considering when it comes to analyzing learner data based on CEFR testing/assessment:

⁃ The CEFR provides proficiency levels of best fit. A learner ‘working at B1’ can typically demonstrate competence across a range of B1-level descriptors, though they may be working at A2 in some areas, B2 in others, etc.

⁃ I haven’t yet come across a coursebook that aims to adequately cover all the modes of communication used in the CEFR (mediation is the one often lacking), or one that comprehensively addresses descriptors related to plurilingual/pluricultural competences.

⁃ Placement tests such as CEPT provide a snapshot only – they are not comprehensive. Tests based on KET, PET, etc. focus mainly on general language development – they are not ‘academic language rich’ and don’t necessarily have a real-world slant. There are placement tests like Versant (linking to Pearson GSE and the overall PTE Academic) which do attempt more ‘real-world’ language use by integrating multiple skills. This test is not without its own flaws though, and is based on an extended set of descriptors beyond the CEFR. I’ll do a write-up on GSE/PTE Academic another time as it’s an interesting one!

⁃ CEFR bands are not equal-distance units: progress may slow as challenge increases; it can be influenced by other factors (L1 literacy, motivation, etc.); and different skills may develop at different rates. With this in mind, any ‘mean value-added’ analysis of a whole class’s progress should factor in that *not every learner should be expected to move up at least one level per academic year*. Treating CEFR levels as equal-distance units is, in many cases, wholly inaccurate.

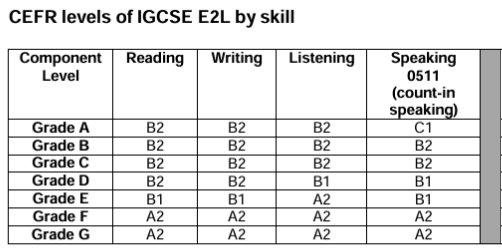

⁃ If learners are studying towards a qualification like IGCSE ESL, the mapping of course grades against CEFR levels clearly demonstrates that learning is expected to slow as challenge increases. In the example shown, one-grade increases in Reading from F to D cover levels A2 to B2, then Grades D to A demonstrate various levels of competence within B2.

⁃ The often-cited estimate that it takes 200 guided learning hours for learners to progress one CEFR level is not guidance from the Council of Europe (who produced the CEFR). It’s a figure attributed to Cambridge English and Assessment, and they carefully hedge that this depends on a range of factors. Arguments that learners in EMI contexts can and will progress faster seem intuitive. However, there are multiple factors at play here too, not least how well Tier 1 universal support is embedded to ensure learning across the curriculum is accessible.

⁃ Sometimes, CEFR level tests are administered to a whole year group, and the data comes as a shock – ‘the intensive EAL group outperformed learners in mainstream classes’. In that case, could the intensive programme have better primed learners for that particular test? Is the comparison always fair? What do we learn from testing a whole cohort anyway?

⁃ The CEFR is a descriptive (qualitative) tool often used in a quantitative way. The most accurate form of assessment for the descriptors is often observing learner performance on a real-world task. Many assessment tasks in pre-packaged resources aligned to the CEFR are not really ‘real world’, or are a poor proxy for real-world academic tasks. Examples include writing an informal email to a friend (unlikely), a 160-word essay/report/review (uber-concise, unrealistic), tag-on unit projects, and so on.
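
To make the earlier point about ‘mean value-added’ a bit more concrete, here is a rough Python sketch. The class data is entirely invented for illustration, and the names (`results`, `band_changes`, `changes_by_start`) are my own, not part of any CEFR tool. It simply contrasts a single class mean with a breakdown of band changes by starting level:

```python
from collections import Counter
from statistics import mean

# Ordinal positions only: the integers encode order, NOT equal distances.
LEVELS = ["A1", "A2", "B1", "B2", "C1", "C2"]
RANK = {level: i for i, level in enumerate(LEVELS)}

# Hypothetical class data (invented): (start-of-year, end-of-year) per learner.
results = [
    ("A1", "A2"), ("A1", "B1"), ("A2", "B1"), ("A2", "B1"),
    ("B1", "B1"), ("B1", "B2"), ("B2", "B2"), ("B2", "B2"),
]

def band_changes(pairs):
    """Number of bands moved per learner, treating bands as ordinal steps."""
    return [RANK[end] - RANK[start] for start, end in pairs]

def changes_by_start(pairs):
    """Count band changes separately for each starting level."""
    summary = {}
    for start, end in pairs:
        summary.setdefault(start, Counter())[RANK[end] - RANK[start]] += 1
    return summary

changes = band_changes(results)
class_mean = mean(changes)          # a single 'value-added' figure
by_start = changes_by_start(results)

print(f"Class mean band change: {class_mean}")
for level in LEVELS:
    if level in by_start:
        print(level, dict(by_start[level]))
```

In this made-up class the mean comes out below one band, which could be read as underperformance. The breakdown shows that the learners who ‘didn’t move’ all started at B1 or B2, where staying within a band for a year may be perfectly reasonable progress.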

None of the above is a criticism of the CEFR – but it does provide some examples of how the CEFR can be misused or its results manipulated.

