Jane Maria Harding da Rosa at EELT asked if I had any tips regarding effective questioning. She felt that effective questioning is not covered much on initial teacher training courses, so is planning some input on it for her blog/channel (see here).

I have nothing groundbreaking to say tbh, but I did do some mini-CPD on this in my last job. Here’s a summary of a few things I mentioned. Sorry for the naff presentation slides – it was a busy time and I had to throw stuff together quickly!

(Note, this input ran alongside some CPD for all teachers related to targeting techniques like cold-calling, bounce-pass, no opt out, etc. I focused more on question types and challenge here, I guess)

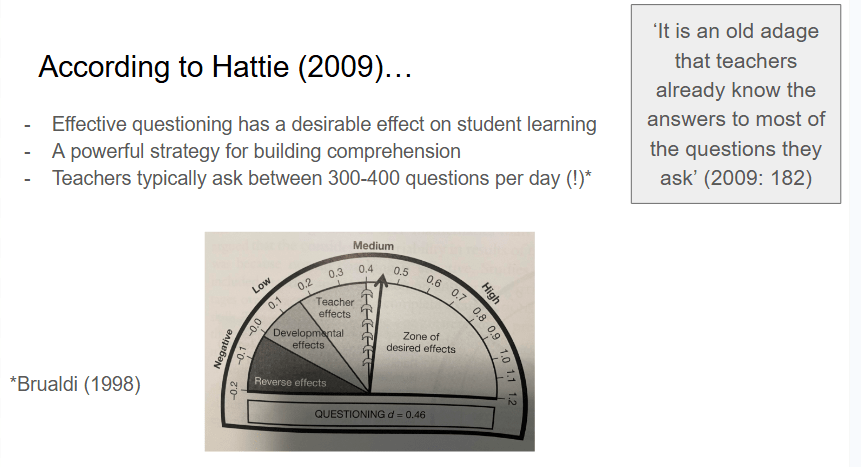

On the importance of effective questioning – here’s Hattie…

Mainstream teachers seem to love a bit of Hattie so I thought it was a good route in. BTW, if you want to read a good critique of his research…

I wonder where Brualdi got that stat from. I mean, I typically ask *myself* 300-400 questions per day (‘Why am I such a bad teacher?’, ‘What on earth was I trying to achieve with that task?’ etc) but not sure I get close to asking learners the same amount. I should, clearly!

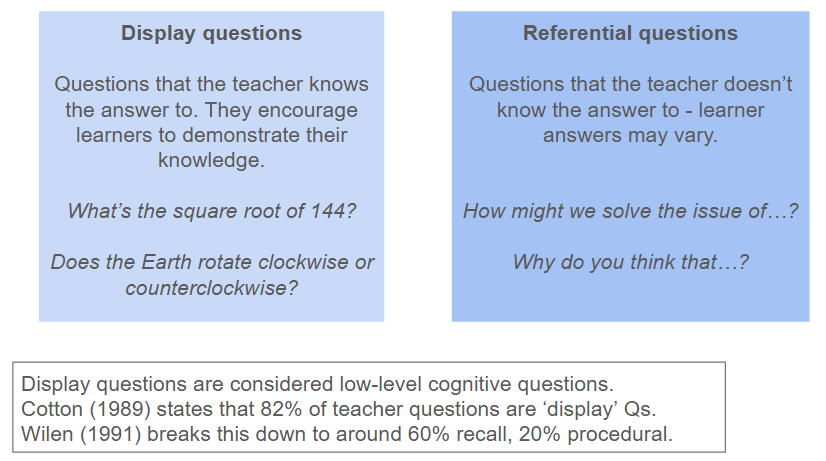

Anyway, that old adage about teachers asking questions to which they know the answer led to a summary of display vs referential questioning:

I’m not sure where I dug the research out from, sorry. And it’s a bit dated. Moving swiftly on…

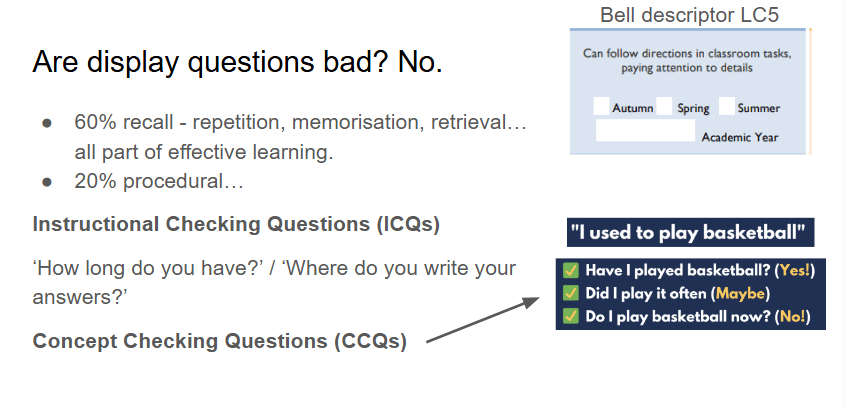

Some might assume that display questions are bad. Not at all. They’re useful for quick recall, checking instructions, checking concepts, etc:

… and therefore for formative assessment – when you look at the Bell Framework, learners are assessed on their ability to follow instructions and pay attention to details, etc, so ICQs can help establish that (as can monitoring of course!).

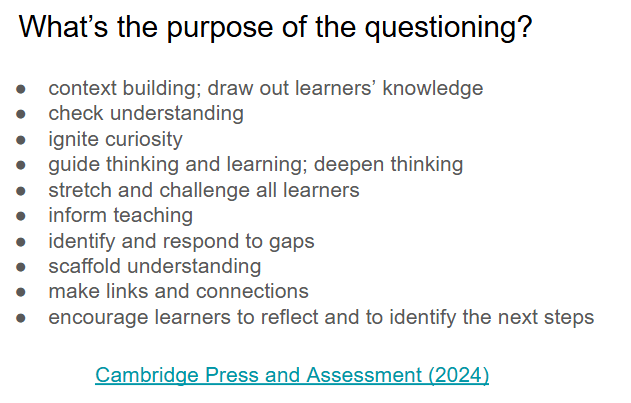

Some purposes of questioning are mentioned in this awesome summary article from Cambridge Assessment. Here’s some of what’s mentioned…

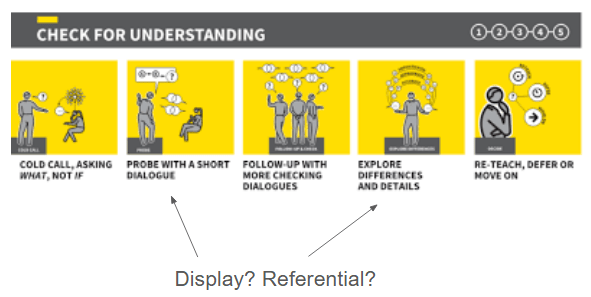

And when it comes to certain teaching moves, like this one for checking understanding (from Walkthrus), you can see how varying the type of questions you ask might fit in…

No hard and fast rules of course, I was just getting teachers to be more aware of the types of questions they might need…

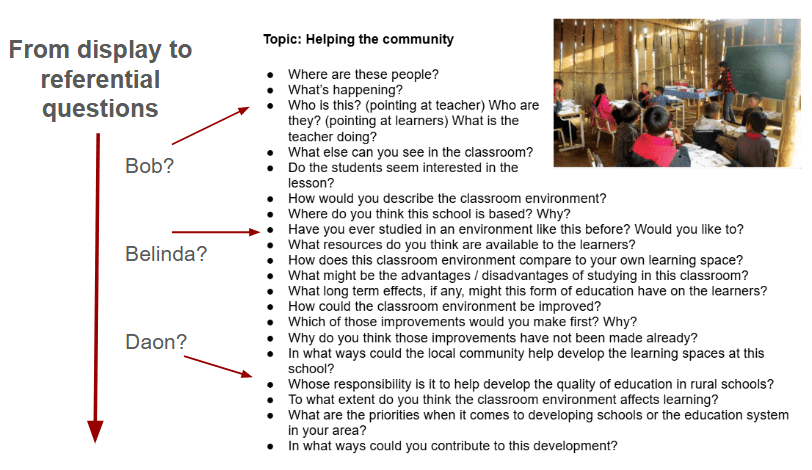

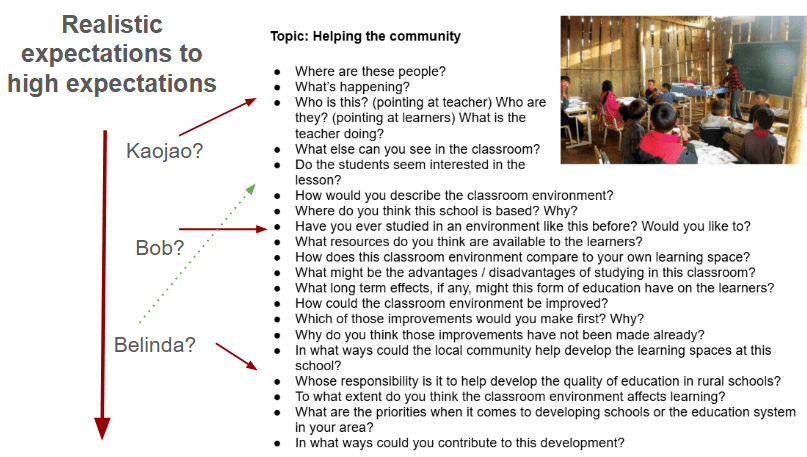

To make this clearer, I took a list of questions moving loosely from display to referential. I highlighted (using learners that our teachers would know) the type of question I might select for certain learners.

I realise you might be thinking ‘well, yeah, more challenge for more proficient learners is obvious’ or something like that. But actually, based on my experience as a support teacher in subject classrooms, it’s not!

Some teachers use lots of display questions for quickly reviewing previous learning – and they often ask these to more proficient learners to keep pace. They might then skip the more exploratory questions so they can move onto new content. This is understandable in high-coverage contexts (and in judgey, observation-heavy contexts), but it’s a missed opportunity for formative assessment or deepening understanding of certain contexts. A reminder to go beyond these short display questions is worthwhile, especially in the context of language development.

And it’s important to experiment with different question types for different learners, giving them a chance to express themselves in greater depth.

Example 1: Bob might benefit confidence-wise from answering short display questions – he’s getting used to sharing ideas quickly in front of the class. But we don’t want to hold Bob back. We can try more complex questions now and then to see how he manages, and perhaps what scaffolds he might need in order to answer effectively. Our questioning may then inform our teaching input.

Example 2: This works both ways – maybe Belinda is finding a certain topic tough and feeling pressured by more exploratory questions – she just wants to ‘know something’ now and again, hahah.

It’s about empathy and reading the room, I guess. Sounds easy, but you never really know what’s gone on earlier that day and how that has affected learner mood/confidence, which in turn can affect their willingness to participate, focus, etc. That’s why I say always allow opt out.

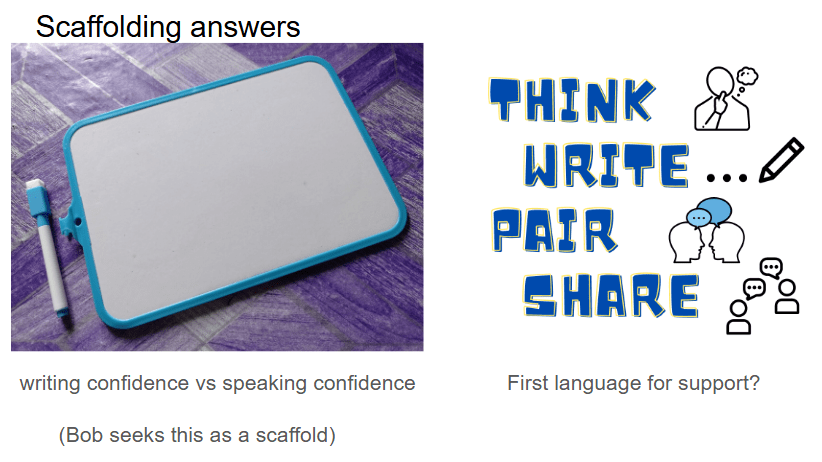

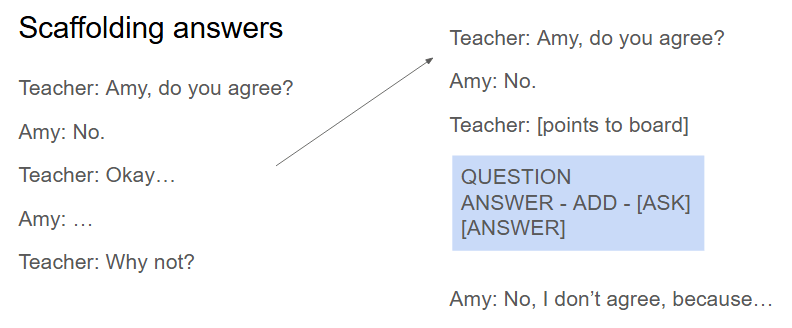

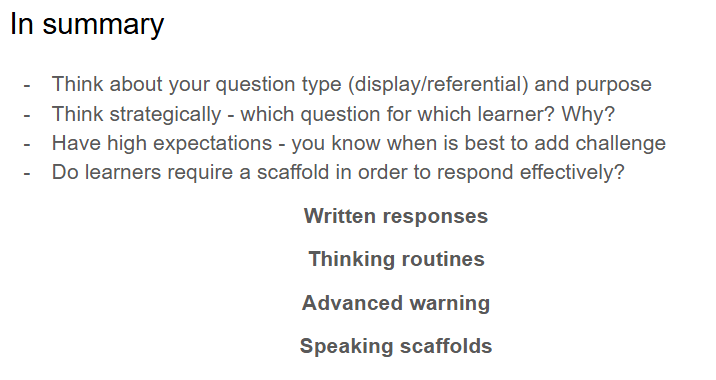

About those scaffolds (many of which I mentioned in that training for Sunderland last week anyway)

I mentioned one particular learner (Bob) who clearly benefited from preparing written responses before sharing. Use adaptations like Think-Write-Pair-Share – if learners don’t feel confident enough to speak then they can still demonstrate understanding through what they’ve written.

And the importance of thinking time too – the research from Rowe is dated but the figures have a big impact so I still use it:

And the advance warning that I’ve mentioned a lot before too:

And flagging active listening during questioning too – relating that to the Bell Assessment Framework again…

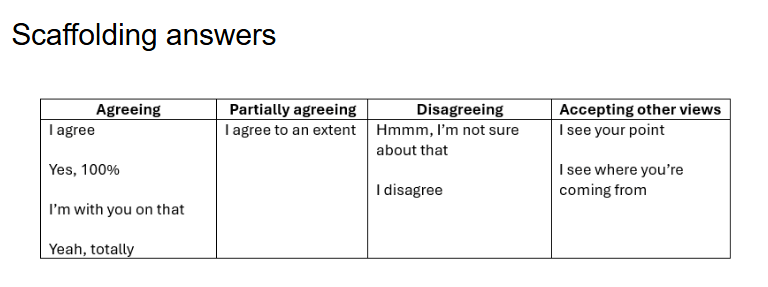

Providing support for output, like these phrases for agreeing/disagreeing.

Making interaction patterns clearer to learners, like highlighting that they should extend their ideas:

So these were the basic tips I shared for everyone:

Which was just a 15-minute CPD slot. However, at the time I delivered this, there was a huge rise in teachers using AI for creating lesson resources, which often included questioning (such as comprehension questions to accompany a text). I added in a quick 5-minute segment which harked back to input from the DipTESOL…

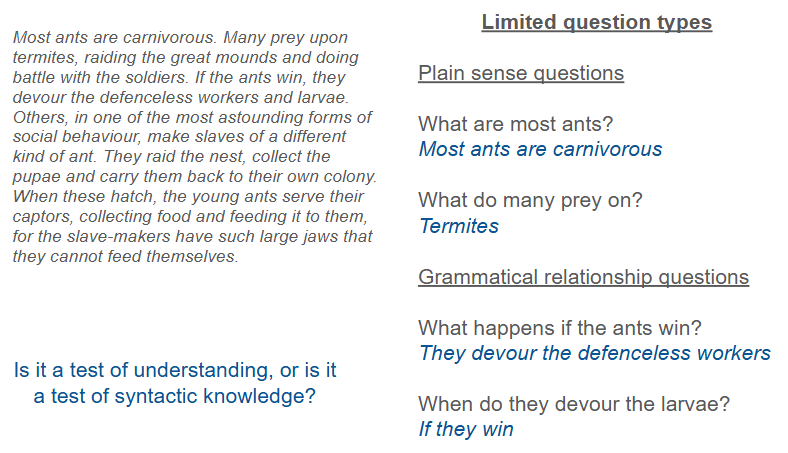

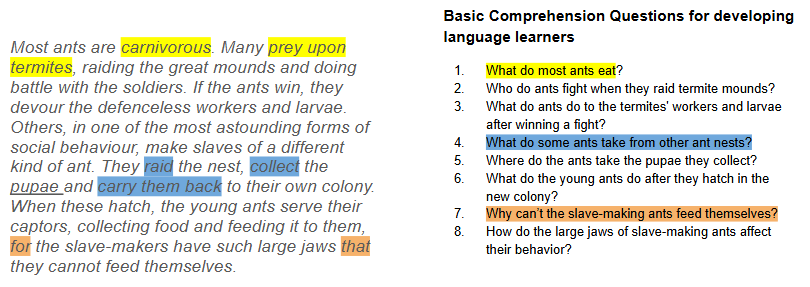

Basically, I highlighted how I’d noticed some teachers were using a lot of plain-sense and grammatical relationship questions:

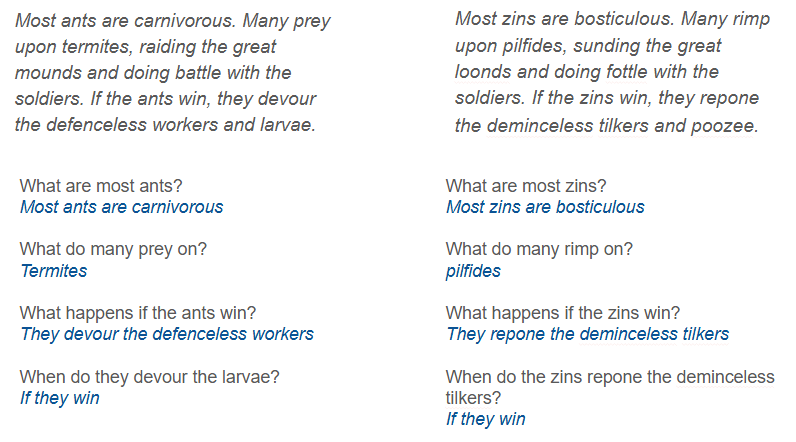

I’ve mentioned this example before on this blog somewhere and realised it was worth drawing attention to. I highlighted how many of the questions used may not actually be testing comprehension – as you can see when you replace key words with nonsense words and can still answer the questions:

(I can’t remember where the original text came from tbh but it was input on my DipTESOL)
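
If you want to try the nonsense-word check yourself on a text, here's a rough Python sketch. Everything in it is made up for illustration (the `swap_key_words` function, the sample text, the nonsense words), and it isn't the original DipTESOL activity. It just swaps the key words you choose for nonsense words so you can see whether the questions can still be answered from sentence structure alone.

```python
import re


def swap_key_words(text, nonsense_for):
    """Replace chosen key words with nonsense words.

    nonsense_for maps each key word (in lowercase) to its nonsense stand-in.
    Only whole words are matched, and a capitalised original gets a
    capitalised replacement.
    """
    # One pattern covering every key word, matched as whole words only
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(word) for word in nonsense_for) + r")\b",
        re.IGNORECASE,
    )

    def swap(match):
        original = match.group(0)
        nonsense = nonsense_for[original.lower()]
        return nonsense.capitalize() if original[0].isupper() else nonsense

    return pattern.sub(swap, text)


# Made-up sample text and nonsense words, purely for illustration
sample_text = "Ants eat leaves. Ants carry the leaves to the nest."
sample_swaps = {"ants": "glorps", "leaves": "fints", "nest": "drog"}

print(swap_key_words(sample_text, sample_swaps))
```

With this sample, ‘What do glorps eat?’ can still be answered (‘fints’) without knowing what either word means, which is the warning sign that the question isn't really testing comprehension.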

I just reminded teachers to be a bit tighter with their instructions when asking AI to create texts and comprehension questions:

For each of the highlighted questions, I showed how there are fewer obvious clues (What do ants eat? Ants eat…) and more of a need to understand synonyms, cotext, and a wider variety of language patterns (e.g. ‘for’ for ‘because’ in Q7).

Given more time, I’d have gone into this in detail and provided a prompt sequence to show how you can get AI to make tighter resources. However, my main point here was simply for teachers to actually review output in more detail, do the tasks themselves, and check the level of challenge. Tbh, I’ve found the output from AI so much better since mentioning this, or perhaps we’ve all got better at using it. Anyway, this does still relate to questioning, so I’m mentioning it here!

So, that’s a bit on questioning, but it’s just scratching the surface. What other advice would you give teachers when it comes to effective questioning? And do you think this input was too straightforward for teachers, or an area worth addressing?

Cheers

